SLMs vs LLMs: 7 Brutal Truths About Cost and Speed

Choosing between SLMs and LLMs is your most critical architectural decision. Large models are smart but expensive. Small models are fast and incredibly cheap. You must choose wisely.

If you build autonomous systems, you cannot guess. You need hard facts. The SLMs vs LLMs debate is not just academic. It dictates your profit margins. It dictates your user experience. It dictates your data privacy.

Stop wasting money on bad API setups. Stop paying for massive models to do simple jobs. This guide strips away the hype. Here is the exact breakdown of how to deploy AI correctly.

The SLMs vs LLMs Baseline

Let us define our terms. What are we actually comparing in the SLMs vs LLMs debate?

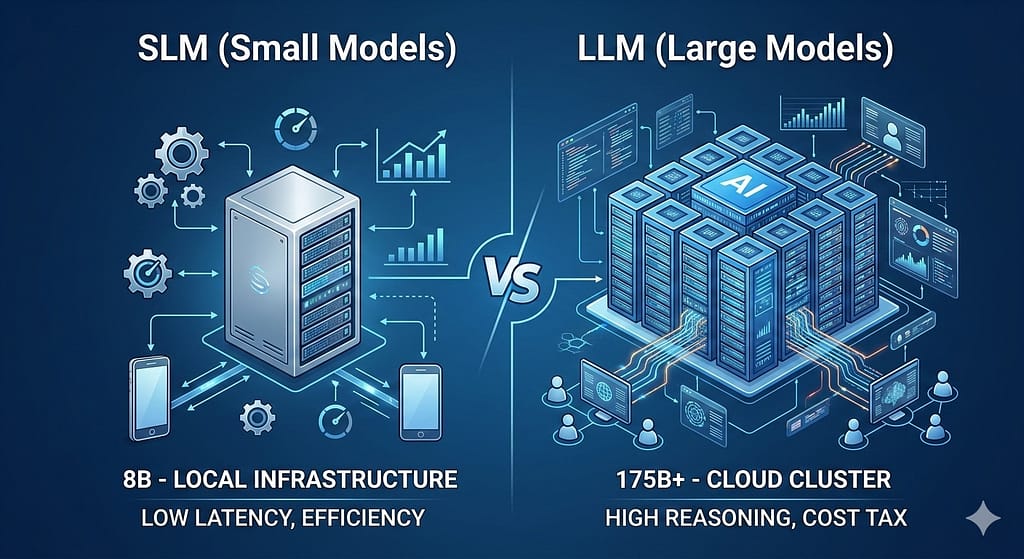

LLMs are Large Language Models. Think of GPT-4 or Claude 3.5. Their vendors do not publish parameter counts, but frontier models are generally estimated at hundreds of billions of parameters or more. They have broad general knowledge. They can write poetry. They can code complex software. But they are heavy. They require massive data centers to run.

SLMs are Small Language Models. Think of Llama 3 8B or Phi-3. They typically have under 15 billion parameters. They do not know everything. But they are highly focused. They can run on local machines. They execute narrow, well-defined tasks reliably.

When you evaluate SLMs vs LLMs, you are trading broad knowledge for raw efficiency.

Truth 1: API Costs Destroy Margins

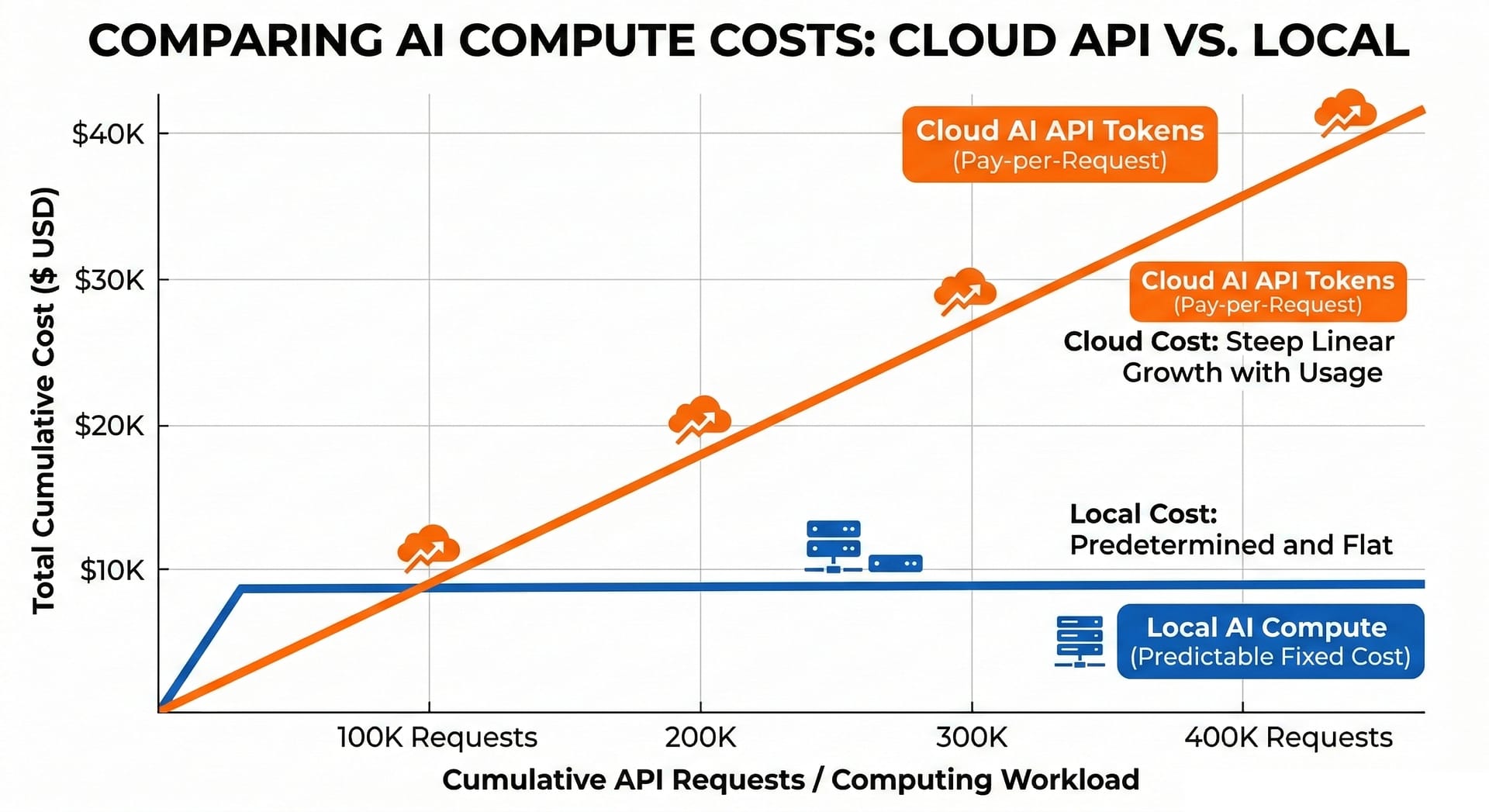

Let us talk about unit economics. The cost difference between SLMs and LLMs is staggering. Frontier model APIs charge a massive premium per token. Every single API call eats into your profit margin.

This is fatal for a high-volume micro-SaaS platform. You cannot scale if every user query adds a per-token fee. You will go bankrupt paying the “cloud tax.”

Small Language Models change the game entirely. You deploy them on your own hardware. You pay for electricity and fixed compute, not per token. At high, steady volumes, operational costs can drop by up to 90 percent.
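Where does a figure like 90 percent come from? It depends entirely on volume. Here is a minimal break-even sketch in Python. The prices are hypothetical placeholders, not real vendor rates: it assumes one flat blended API price per million tokens and one fixed monthly cost for a self-hosted server. Plug in your own numbers.

```python
# Hypothetical prices for illustration only; substitute your provider's
# real per-token rates and your actual hardware and electricity costs.
API_PRICE_PER_MILLION_TOKENS = 10.00  # USD, assumed blended input+output rate
SELF_HOSTED_MONTHLY_COST = 600.00     # USD, assumed amortized GPU server + power


def monthly_api_cost(tokens_per_month: int, price_per_million: float) -> float:
    """Per-token billing: cost grows linearly with usage."""
    return tokens_per_month / 1_000_000 * price_per_million


def break_even_tokens(fixed_monthly_cost: float, price_per_million: float) -> float:
    """Monthly token volume at which self-hosting costs the same as the API."""
    return fixed_monthly_cost / price_per_million * 1_000_000


def savings_ratio(tokens_per_month: int, fixed_monthly_cost: float,
                  price_per_million: float) -> float:
    """Fraction of the API bill saved by self-hosting (negative means it costs more)."""
    api = monthly_api_cost(tokens_per_month, price_per_million)
    if api == 0:
        raise ValueError("no usage, so there is no API bill to compare against")
    return 1 - fixed_monthly_cost / api


if __name__ == "__main__":
    for volume in (10_000_000, 60_000_000, 600_000_000):
        api = monthly_api_cost(volume, API_PRICE_PER_MILLION_TOKENS)
        ratio = savings_ratio(volume, SELF_HOSTED_MONTHLY_COST, API_PRICE_PER_MILLION_TOKENS)
        print(f"{volume:>12,} tokens/month: API ${api:,.2f} vs "
              f"self-hosted ${SELF_HOSTED_MONTHLY_COST:,.2f} ({ratio:+.0%} saved)")
    be = break_even_tokens(SELF_HOSTED_MONTHLY_COST, API_PRICE_PER_MILLION_TOKENS)
    print(f"Break-even: {be:,.0f} tokens/month")
```

With these assumed numbers, self-hosting loses money below 60 million tokens a month and saves 90 percent at 600 million. Fixed compute only pays off at high, steady volume.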

Truth 2: Speed is a Feature

Users hate waiting. Speed is another critical factor when comparing SLMs and LLMs. Large models often take seconds to generate a reply. That delay ruins real-time chat experiences and frustrates users.

SLMs running on local hardware can return short answers in tens to hundreds of milliseconds. They are tuned for specific tasks. You sacrifice broad world knowledge. But you gain raw execution speed.

For narrow tasks like JSON extraction, an SLM is an ideal fit. It does one thing extremely well. It does it fast.
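Small models do occasionally emit malformed JSON, so production pipelines usually validate the output before trusting it. A minimal sketch, assuming your SLM wrapper is any function that takes a prompt and returns raw text; the schema fields and the `fake_slm` stand-in are hypothetical.

```python
import json
from typing import Callable, Optional

# Hypothetical schema: the fields we ask the SLM to return, with allowed types.
REQUIRED_FIELDS = {
    "intent": (str,),
    "budget": (int, float, type(None)),
    "location": (str, type(None)),
}


def parse_extraction(raw: str) -> Optional[dict]:
    """Parse the model's raw text as JSON and check required fields and types.

    Returns the parsed dict, or None if the output is unusable.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    for field, allowed in REQUIRED_FIELDS.items():
        if field not in data:
            return None
        value = data[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, allowed):
            return None
    return data


def extract_with_retry(model: Callable[[str], str], prompt: str,
                       attempts: int = 2) -> Optional[dict]:
    """Call a small-model wrapper and retry when its output fails validation."""
    for _ in range(attempts):
        result = parse_extraction(model(prompt))
        if result is not None:
            return result
    return None


if __name__ == "__main__":
    # Stand-in for a real SLM call; returns canned JSON.
    def fake_slm(prompt: str) -> str:
        return '{"intent": "buy", "budget": 450000, "location": "Lisbon"}'

    print(extract_with_retry(fake_slm, "Extract intent, budget, location as JSON."))
```

A single retry is usually cheap on a local model. If validation still fails, that message is a good candidate for escalation.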

| Metric | Small Models (SLMs) | Large Models (LLMs) |
|---|---|---|
| Latency | Under 100 milliseconds | 1 to 3+ seconds |
| Hardware | Local GPU (16GB VRAM) | Massive Cloud Clusters |
| Best Use Case | Data routing, intent sorting | Complex logic, coding |
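The latency figures in the table are typical ranges, not guarantees. Measure your own stack. A small harness like this sketch reports median (p50) and 95th-percentile (p95) latency. The `call` argument is a placeholder for whatever model client you use; the sleep-based stand-in exists only so the code runs without a model.

```python
import statistics
import time
from typing import Callable, Dict


def measure_latency_ms(call: Callable[[], object], runs: int = 50) -> Dict[str, float]:
    """Time repeated calls and report median (p50) and 95th-percentile (p95) latency in ms."""
    if runs < 2:
        raise ValueError("need at least 2 runs to compute percentiles")
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000)
    # quantiles(n=20) returns 19 cut points; index 18 is the 95th percentile.
    p95 = statistics.quantiles(samples, n=20, method="inclusive")[18]
    return {"p50_ms": statistics.median(samples), "p95_ms": p95, "runs": runs}


if __name__ == "__main__":
    # Stand-in for a model call so the sketch runs anywhere; swap in your client.
    print(measure_latency_ms(lambda: time.sleep(0.01), runs=20))
```

Track p95, not just the average. Users remember the slow replies.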

Truth 3: Data Privacy Matters

Looking at SLMs vs LLMs for privacy reveals a clear winner. Sending sensitive data to the cloud is risky. Enterprise clients demand strict data privacy. When you use public APIs, you lose control of the data flow. You expose your clients to risk.

Running an SLM locally removes this exposure. The data never leaves your server. This makes SOC 2 and HIPAA compliance much easier.

If privacy is your top priority, you must use local models. You have full custody of the data. No third party ever sees your prompts.
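Keeping data local is a policy you can enforce in code. One simple guard, sketched under the assumption that your app sends prompts to an HTTP inference server: refuse any endpoint whose host does not resolve to a loopback address. The function names and the example URLs are hypothetical.

```python
import ipaddress
import socket
from urllib.parse import urlparse


def is_local_endpoint(url: str) -> bool:
    """True only if every address the URL's host resolves to is a loopback address."""
    host = urlparse(url).hostname
    if not host:
        return False
    try:
        infos = socket.getaddrinfo(host, None)
    except socket.gaierror:
        return False  # unresolvable hosts are treated as non-local
    try:
        addresses = {ipaddress.ip_address(info[4][0]) for info in infos}
    except ValueError:
        return False
    return bool(addresses) and all(addr.is_loopback for addr in addresses)


def require_local_endpoint(url: str) -> None:
    """Raise before any prompt is sent to a host outside this machine."""
    if not is_local_endpoint(url):
        raise PermissionError(f"Refusing to send prompts to non-local endpoint: {url}")


if __name__ == "__main__":
    require_local_endpoint("http://127.0.0.1:8000/generate")  # hypothetical local server
    # 203.0.113.0/24 is a documentation-only range; numeric hosts need no DNS lookup.
    print(is_local_endpoint("http://203.0.113.10/v1"))
```

If your model runs on a separate machine inside your network, extend the check to allow your private subnet instead of loopback only.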

Truth 4: The Hardware Reality

Let us examine the hardware requirements for SLMs vs LLMs. Running a frontier-scale LLM locally is nearly impossible for small teams, and closed models like GPT-4 cannot be self-hosted at all. Large open-weight models need massive GPU clusters with hundreds of gigabytes of VRAM. That hardware costs hundreds of thousands of dollars.

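SLMs are democratic. You can run an 8B parameter model on a standard commercial rig. A GPU with 16GB or 24GB of VRAM is enough, especially with 8-bit or 4-bit quantization (at 16-bit precision, the weights of an 8B model alone take about 16GB). This hardware is affordable. It is accessible.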

You buy the hardware once. Then you own your AI infrastructure instead of renting it by the token.
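The VRAM numbers follow from simple arithmetic: weight memory is roughly parameter count times bytes per parameter. A minimal estimator, assuming a flat 20 percent allowance for the KV cache and runtime overhead (a rough placeholder; real usage grows with context length and batch size):

```python
# Approximate bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Memory for the model weights alone, in decimal GB.

    Billions of parameters times bytes per parameter gives GB (1 GB = 10**9 bytes).
    """
    return params_billion * BYTES_PER_PARAM[precision]


def fits_in_vram(params_billion: float, precision: str, vram_gb: float,
                 overhead: float = 1.2) -> bool:
    """Rough check: weights plus an assumed 20% allowance for KV cache and runtime."""
    return weight_memory_gb(params_billion, precision) * overhead <= vram_gb


if __name__ == "__main__":
    for params, precision in [(8, "fp16"), (8, "int8"), (8, "int4"), (400, "fp16")]:
        need = weight_memory_gb(params, precision)
        print(f"{params}B @ {precision}: ~{need:.0f} GB weights, "
              f"fits 16 GB: {fits_in_vram(params, precision, 16)}, "
              f"fits 24 GB: {fits_in_vram(params, precision, 24)}")
```

By this estimate, an 8B model at 16-bit precision needs about 16GB for weights alone, so a 16GB card calls for 8-bit or 4-bit quantization. A 400B-parameter model needs roughly 800GB, which is cluster territory.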

Truth 5: Hybrid Routing Wins

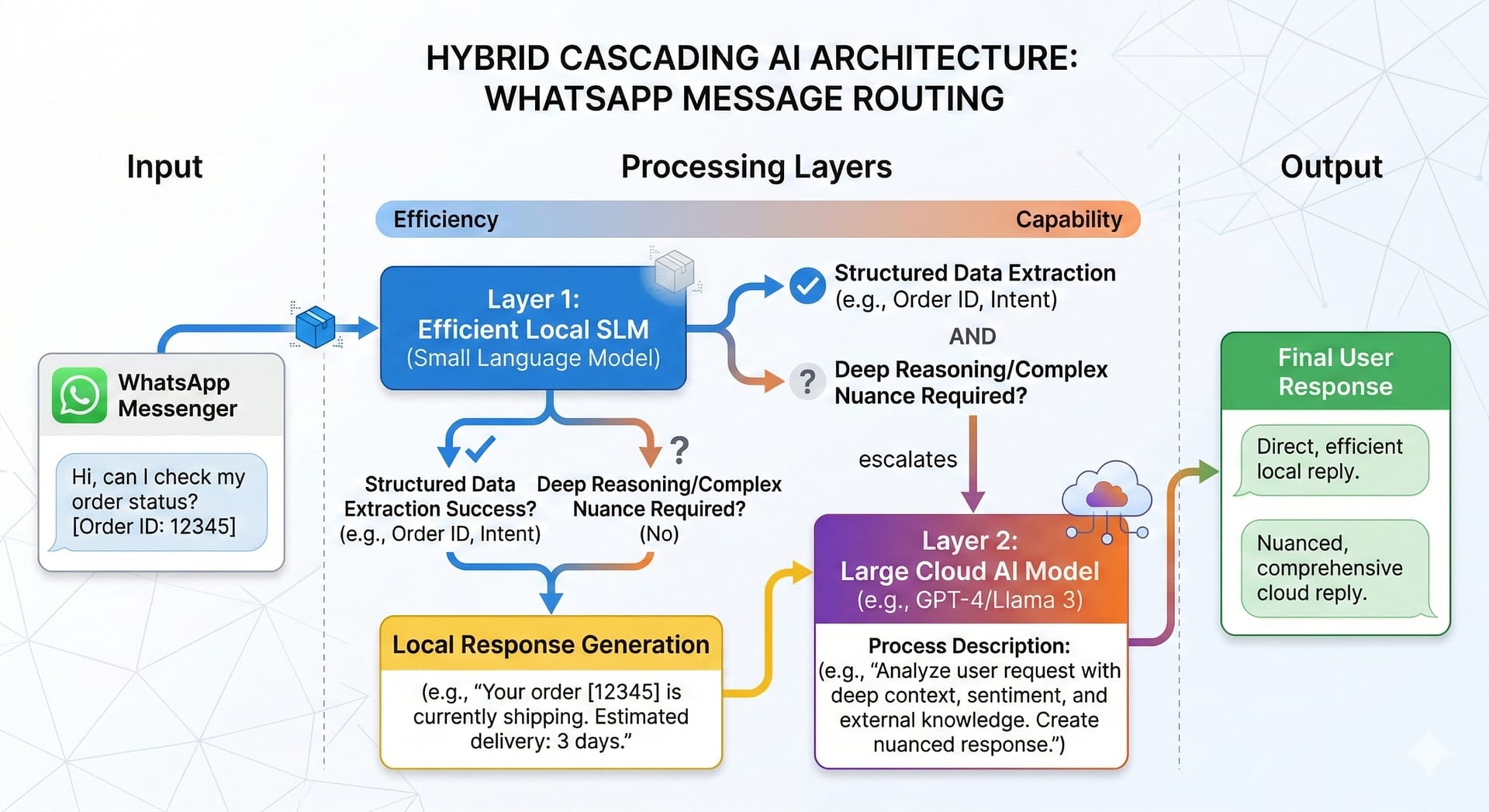

The best systems use both. Routing between SLMs and LLMs is an art. You need a hybrid cascading architecture.

Use an SLM for frontline triage. Let it handle basic intent routing. It qualifies the lead instantly. It extracts the necessary entities.

If the user asks a complex question, escalate it. Route that specific query to a heavier LLM. You can build this routing logic easily. Tools like n8n make this simple.
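The same cascade can be expressed in a few lines of code. This is a minimal Python sketch, not an n8n workflow. It assumes the SLM returns an intent label plus a confidence score; the `Triage` type, the intent names, and the 0.8 threshold are hypothetical choices, and the three callables stand in for your real model clients.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Triage:
    intent: str        # label produced by the small model, e.g. "greeting"
    confidence: float  # 0.0 to 1.0; assumes the SLM reports a score


# Hypothetical intents the SLM is trusted to answer on its own.
SIMPLE_INTENTS = {"greeting", "availability", "faq"}


def route(message: str,
          slm_triage: Callable[[str], Triage],
          slm_answer: Callable[[str], str],
          llm_answer: Callable[[str], str],
          min_confidence: float = 0.8) -> Tuple[str, str]:
    """SLM triages every message; only complex or uncertain ones reach the LLM.

    Returns (model_used, reply).
    """
    triage = slm_triage(message)
    if triage.intent in SIMPLE_INTENTS and triage.confidence >= min_confidence:
        return "slm", slm_answer(message)
    return "llm", llm_answer(message)


if __name__ == "__main__":
    def fake_triage(msg: str) -> Triage:
        # Keyword stand-in for a real SLM classifier.
        if msg.lower().strip(" !?.") in {"hi", "hello"}:
            return Triage("greeting", 0.95)
        return Triage("complex", 0.6)

    for msg in ("Hello!", "Compare the tax implications of these two properties."):
        print(route(msg, fake_triage,
                    slm_answer=lambda m: "Hi! How can I help?",
                    llm_answer=lambda m: "[LLM answer]"))
```

Escalating on low confidence, not only on complex intents, keeps the cheap model from guessing on messages it was not built for.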

Read the official n8n documentation on routing to see how. You can also review our internal strategies on Context-Aware AI for Business. This hybrid approach protects your margins.

Truth 6: Real Estate Use Cases

Let us look at a real-world example. Imagine building a WhatsApp-first AI system. You want to automate lead qualification for real estate brokers.

If you send every incoming message to a frontier model, you lose money. Buyers send spam. They say “hello.” They ask “is this available?”

A smart broker uses an SLM first. The small model extracts the budget. It extracts the location preference. It runs fast. It costs almost nothing per message. Only serious negotiations get passed to the expensive LLM. This is how you build a profitable agent factory.
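Here is how that funnel might look as code. A minimal sketch with two stages: a free pre-filter that catches throwaway openers before any model runs, then a rule applied to the SLM's extracted entities. It assumes a lead counts as qualified once both budget and location are known; that rule, the trivial-message list, and the function names are hypothetical placeholders for your own criteria.

```python
import re

# Hypothetical list of low-value openers that never need a model call.
TRIVIAL_MESSAGES = {"hi", "hello", "hey", "is this available", "is it still available"}


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    return " ".join(re.sub(r"[^a-z0-9\s]", "", text.lower()).split())


def is_trivial(message: str) -> bool:
    """Cheap pre-filter that runs before any model is called."""
    return normalize(message) in TRIVIAL_MESSAGES


def next_step(extracted: dict) -> str:
    """Decide what happens after the SLM extracts entities.

    `extracted` is assumed to look like {"budget": 450000, "location": "Lisbon"}.
    """
    has_budget = extracted.get("budget") is not None
    has_location = bool(extracted.get("location"))
    if has_budget and has_location:
        return "escalate_to_llm"  # qualified lead: worth the expensive model
    return "ask_for_details"      # keep the conversation on the cheap SLM


if __name__ == "__main__":
    print(is_trivial("Is this available?"))
    print(next_step({"budget": 450000, "location": "Lisbon"}))
```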

Truth 7: Open Source Domination

Open source changes the SLMs vs LLMs landscape daily. The community is moving fast. Small models are getting smarter every week.

You can download many of these models for free, subject to each model's license. You can fine-tune them on your own data. You can make an SLM act like a senior broker. You just need good training data.
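Good training data usually starts as real conversations paired with the replies you wish your best broker had sent. A minimal sketch that converts those pairs into chat-style `messages` JSONL, assuming your fine-tuning tool accepts that format (OpenAI's fine-tuning API and Hugging Face TRL's conversational datasets use this shape; check your tool's docs). The system prompt and example pair are hypothetical.

```python
import json
from typing import Iterable, List, Tuple

# Hypothetical system prompt; write one that matches your own brokerage.
SYSTEM_PROMPT = "You are a senior real estate broker. Qualify leads concisely."


def to_chat_records(pairs: Iterable[Tuple[str, str]]) -> List[dict]:
    """Turn (buyer message, ideal broker reply) pairs into chat-format training records."""
    return [
        {"messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": buyer},
            {"role": "assistant", "content": reply},
        ]}
        for buyer, reply in pairs
    ]


def to_jsonl(records: List[dict]) -> str:
    """Serialize one JSON object per line (JSONL)."""
    return "".join(json.dumps(r, ensure_ascii=False) + "\n" for r in records)


if __name__ == "__main__":
    examples = [
        ("Looking for a 2-bed in Porto, max 300k.",
         "Great. Do you need parking, and when do you plan to move?"),
    ]
    # Save this output as a .jsonl file for your fine-tuning tool.
    print(to_jsonl(to_chat_records(examples)), end="")
```

Quality beats quantity here. A few hundred clean, representative pairs teach more than thousands of noisy ones.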

Proprietary LLMs lock you into an ecosystem. Open source SLMs give you freedom. They give you leverage. They give you total control over your business logic.

Frequently Asked Questions

What is the core difference in SLMs vs LLMs?

The core difference is scale. Large models have massive parameter counts for complex reasoning. Small models are optimized for speed, low cost, and specific tasks. Small models can run locally. Large models typically require cloud-scale infrastructure.

Which is cheaper to run for a micro-SaaS?

In the battle of SLMs vs LLMs, small models are significantly cheaper at volume. You pay for fixed server compute rather than a per-token API fee. At high, steady volumes, this can cut operational costs by up to 90 percent.

Can I run small models locally?

Yes. You can run models like Llama 3 8B on standard commercial hardware. Your data stays on your own machines, and there is no network round-trip to a cloud API. It is one of the most effective ways to secure your data.

When should I pay for a large model?

Pay for an LLM only when the task requires multi-step reasoning, deep logic, or writing complex code. Never use an LLM for simple data extraction or basic intent routing. Use the right tool for the job.