Sustainable AI: 7 Brutal Truths on Enterprise Compute Costs

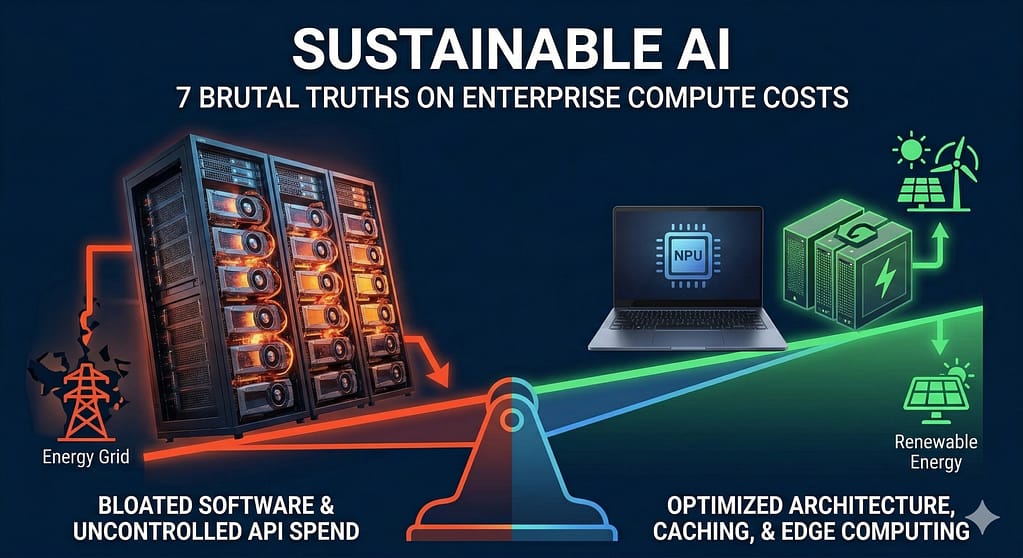

Sustainable AI is no longer a corporate public relations talking point. It is a strict mathematical baseline for survival. If you ignore the energy economics of your systems, you will go bankrupt.

Compute is finite. Data centers are hitting hard physical limits on the power grid. Energy costs are rising fast. These physical constraints are destroying the profit margins of bloated software.

You cannot deploy massive language models for every minor task. The cloud tax is too high. You need a ruthless strategy to optimize tokens, caching, and hardware. This guide strips away the greenwashing. Here is the exact operational framework you need.

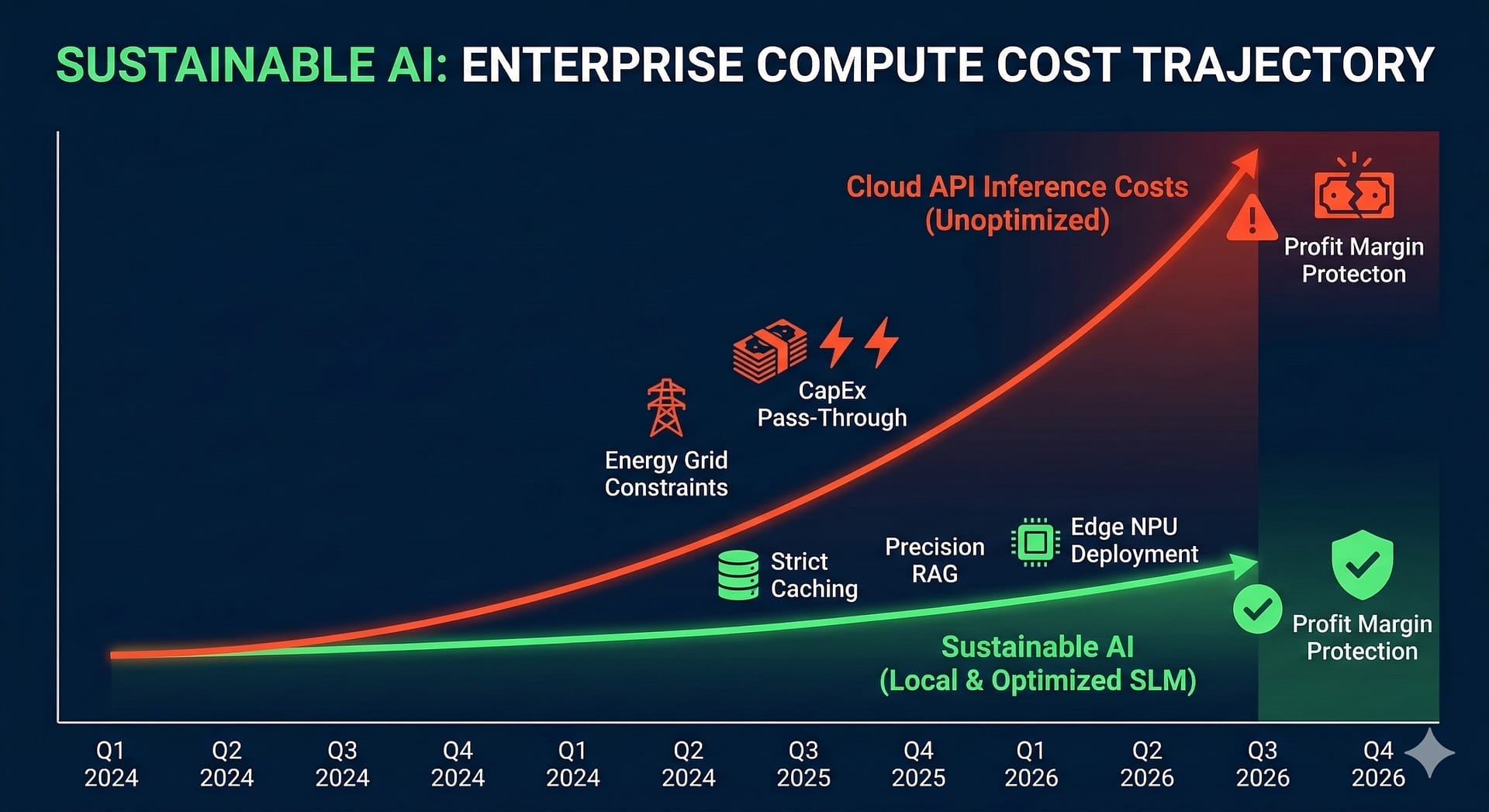

Truth 1: The Power Grid is Starving

Look at the physical infrastructure. Massive GPU clusters require staggering amounts of electricity. A single dense rack of NVIDIA H100 servers can draw tens of kilowatts, roughly the average load of dozens of homes.

In many regions, data center operators cannot secure enough grid capacity. Dense racks push past the limits of air cooling, so liquid cooling is becoming standard for new GPU deployments. These are expensive, hard physical limits. The energy grid cannot scale as fast as software demand.

This directly impacts your business. When data centers pay more for power, your API costs increase. A lack of sustainable AI practices at the hardware level translates to higher inference costs for you.

Truth 2: CapEx Defines Your API Bill

You must understand capital expenditures (CapEx). Frontier AI companies spend billions on compute clusters. They pass this cost to you.

Every token you generate carries a massive energy tax. If your application relies entirely on API calls to massive generalist models, your margins are dead. You are paying for the energy required to cool thousands of GPUs.

You cannot build a profitable micro-SaaS with these unit economics. You must actively engineer systems that require less power. This is the core principle of operational sustainable AI.
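A rough way to test these unit economics is to compute API spend per user against subscription revenue. The sketch below is illustrative only: the token counts, request volume, prices, and the names `RequestProfile`, `monthly_api_cost_per_user`, and `gross_margin` are all hypothetical, not real vendor pricing. Substitute your own measurements.

```python
from dataclasses import dataclass


@dataclass
class RequestProfile:
    input_tokens: int                  # prompt + retrieved context per request
    output_tokens: int                 # generated tokens per request
    requests_per_user_per_month: int


def monthly_api_cost_per_user(
    profile: RequestProfile,
    usd_per_million_input: float,
    usd_per_million_output: float,
) -> float:
    """API spend for one user over one month, in USD."""
    per_request = (
        profile.input_tokens * usd_per_million_input
        + profile.output_tokens * usd_per_million_output
    ) / 1_000_000
    return per_request * profile.requests_per_user_per_month


def gross_margin(subscription_usd: float, cost_usd: float) -> float:
    """Fraction of subscription revenue left after API spend."""
    return (subscription_usd - cost_usd) / subscription_usd


# Hypothetical inputs, chosen only to illustrate the calculation.
profile = RequestProfile(
    input_tokens=6_000, output_tokens=500, requests_per_user_per_month=400
)
cost = monthly_api_cost_per_user(
    profile, usd_per_million_input=3.0, usd_per_million_output=15.0
)
margin = gross_margin(subscription_usd=10.0, cost_usd=cost)
print(f"API cost per user: ${cost:.2f}/month, gross margin: {margin:.0%}")
```

With these made-up inputs, the API bill alone exceeds a 10 dollar subscription, so the margin is negative before any other cost is counted. Shrinking `input_tokens` is usually the fastest lever.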

Truth 3: Sloppy RAG Burns Cash

Retrieval-Augmented Generation (RAG) is standard practice. But most developers build sloppy pipelines. They retrieve too much context.

If your vector database pulls 50 text chunks when it only needs three, you are wasting compute. You are forcing the LLM to process thousands of useless tokens. This drives up latency. It burns unnecessary electricity. It drains your wallet.

You must optimize your embedding models. Use precise chunking strategies. Rank your search results before feeding them to the prompt. Efficient RAG is the lowest-hanging fruit in sustainable AI deployment.
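The ranking step can be sketched as a small filter that runs between retrieval and prompt assembly. This is a minimal illustration, assuming your retriever or reranker already returns a relevance score per chunk. The names `Chunk`, `approx_tokens`, and `select_context`, the default thresholds, and the whitespace-based token estimate are all assumptions; use your model's real tokenizer in production.

```python
from typing import NamedTuple


class Chunk(NamedTuple):
    text: str
    score: float  # relevance from a retriever or reranker; higher is better


def approx_tokens(text: str) -> int:
    """Crude token estimate (whitespace-separated words)."""
    return len(text.split())


def select_context(
    chunks: list[Chunk],
    top_k: int = 3,
    min_score: float = 0.5,
    token_budget: int = 1_000,
) -> list[Chunk]:
    """Keep the best-scoring chunks that fit within top_k and token_budget."""
    ranked = sorted(chunks, key=lambda c: c.score, reverse=True)
    selected: list[Chunk] = []
    used = 0
    for chunk in ranked:
        # Ranked order means everything after a low score is also low.
        if len(selected) >= top_k or chunk.score < min_score:
            break
        cost = approx_tokens(chunk.text)
        if used + cost > token_budget:
            continue  # too large for the remaining budget; a smaller chunk may fit
        selected.append(chunk)
        used += cost
    return selected


candidates = [
    Chunk("Returns are accepted within 30 days of delivery.", 0.91),
    Chunk("Our office dog is named Biscuit.", 0.12),
    Chunk("Refunds are issued to the original payment method.", 0.84),
    Chunk("Shipping is free on orders over 50 dollars.", 0.47),
]
context = select_context(candidates)
print([c.text for c in context])
```

Only the two chunks that clear the score threshold reach the prompt. The hard cap on `top_k` and `token_budget` is the point: the LLM never sees more context than you explicitly allow.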

For deeper technical workflows on this, read our guide on Production-Ready RAG Architecture.

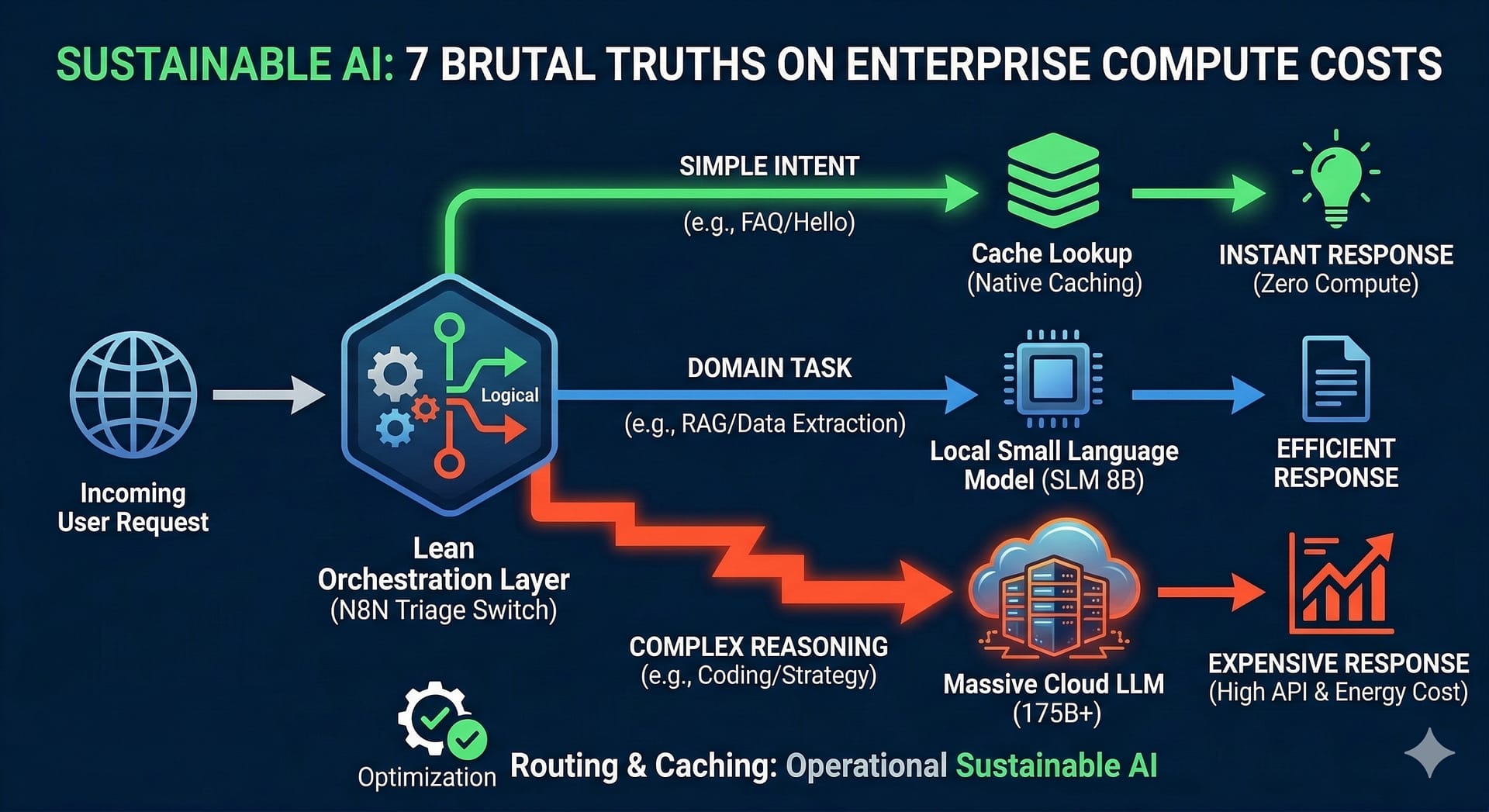

Truth 4: Caching is Mandatory

Never compute the same answer twice. This is an unforgivable engineering sin. Yet, most automated agents run identical prompts thousands of times a day.

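You must implement strict caching. When a user asks a common question, serve the answer from a static response cache. Bypass the LLM entirely. This drops the compute cost of that transaction to near zero: one database read.

Providers like Anthropic also offer native prompt caching, which is a different mechanism. The model still runs, but a repeated prompt prefix (a system prompt, a long document) is not reprocessed from scratch. Cached input tokens can be billed at up to 90 percent less than regular input tokens. Use both. Together they cut your energy footprint immediately. This is how you enforce sustainable AI at the API layer.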
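The static cache for common questions can be sketched in a few lines. This is a minimal in-memory illustration, not a production design: the class `ResponseCache`, the normalization rule, the TTL default, and the `fake_llm` stand-in are all assumptions. A real deployment would more likely use a shared store such as Redis.

```python
import hashlib
import time
from typing import Callable


class ResponseCache:
    """In-memory answer cache keyed by a normalized prompt, with a TTL."""

    def __init__(self, ttl_seconds: float = 3600.0) -> None:
        self.ttl_seconds = ttl_seconds
        self._store: dict[str, tuple[float, str]] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Lowercase and collapse whitespace so trivial variations share a key.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_or_compute(self, prompt: str, compute: Callable[[str], str]) -> str:
        key = self._key(prompt)
        now = time.monotonic()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl_seconds:
            self.hits += 1
            return entry[1]  # served without calling the model
        self.misses += 1
        answer = compute(prompt)
        self._store[key] = (now, answer)
        return answer


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call, used only for this example."""
    return f"answer to: {prompt}"


cache = ResponseCache()
first = cache.get_or_compute("What are your opening hours?", fake_llm)
second = cache.get_or_compute("  what are your OPENING hours? ", fake_llm)
print(cache.hits, cache.misses)
```

Exact-match keys only catch identical or trivially reformatted prompts. Matching paraphrases would need a semantic cache, which adds an embedding lookup and its own accuracy risks.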

Truth 5: Hardware Efficiency Metrics

Stop looking at raw parameter counts. Start looking at tokens-per-watt: throughput divided by power draw, which works out to tokens per joule. No other metric says more about long-term profitability.

If a smaller model can execute your specific task using ten percent of the power, you must use it. Optimization is a hard requirement.

Review the comparison below to understand the stark difference in energy economics.

| Model Architecture | Power Draw (Est. per Query) | Economic Impact | Best Use Case |
|---|---|---|---|
| Massive Cloud LLM (175B+) | Extremely High (Server Rack Level) | Destroys margins at high volume. | Deep reasoning, code generation. |
| Local SLM (8B) | Low (Single Workstation GPU) | Protects margins. Highly scalable. | Intent routing, JSON extraction. |
| Cached Response | Near Zero (Basic Database Read) | Maximum profitability. | FAQ answering, static lookups. |
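To put numbers on a comparison like this for your own stack, divide measured throughput by measured power draw. Tokens per second over watts gives tokens per joule, and from there an electricity cost per million tokens. The sketch below shows the arithmetic only. The throughput, wattage, and electricity price are placeholder values, not benchmarks of any real model or GPU, and the function names are hypothetical.

```python
JOULES_PER_KWH = 3_600_000


def tokens_per_joule(tokens_per_second: float, avg_power_watts: float) -> float:
    """Energy efficiency: (tokens/s) / (J/s) = tokens per joule."""
    return tokens_per_second / avg_power_watts


def energy_cost_per_million_tokens(
    tokens_per_second: float, avg_power_watts: float, usd_per_kwh: float
) -> float:
    """Electricity cost in USD to generate one million tokens."""
    joules = 1_000_000 / tokens_per_joule(tokens_per_second, avg_power_watts)
    return joules / JOULES_PER_KWH * usd_per_kwh


# Placeholder measurements; replace with readings from your own hardware.
deployments = {
    "large model, multi-GPU server": (50.0, 5_000.0),
    "small model, single GPU": (80.0, 300.0),
}
for name, (tps, watts) in deployments.items():
    efficiency = tokens_per_joule(tps, watts)
    cost = energy_cost_per_million_tokens(tps, watts, usd_per_kwh=0.10)
    print(f"{name}: {efficiency:.3f} tokens/J, ${cost:.2f} per 1M tokens")
```

This covers electricity only. Hardware amortization, cooling overhead, and idle time all add to the real figure, so treat the result as a lower bound.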

Truth 6: Build Lean Architecture

You need a cascading architecture. Do not send every request to the largest model. You must triage incoming data.

Use simple, low-power classifiers to sort user intent. If a user says “hello”, a lightweight script should reply. If they ask a specific product question, a local SLM should handle it. Only escalate complex logical problems to heavy frontier models.

This requires orchestration. Connect your webhooks to an automation platform like n8n. Build logical switches. Read the n8n switch node documentation to see how to split traffic based on complexity.

By routing effectively, you drastically reduce your energy reliance. Sustainable AI is about using the exact amount of compute required for the job. Nothing more.
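The triage logic can be sketched as a single routing function that picks the cheapest tier able to handle a message. This is a toy illustration: the keyword heuristics stand in for a trained intent classifier, and the names `Tier`, `route`, `COMPLEX_HINTS`, and the word-count threshold are all assumptions rather than a recommended rule set.

```python
import re
from enum import Enum


class Tier(Enum):
    SCRIPT = "script"        # canned reply, no model involved
    LOCAL_SLM = "local_slm"  # small local model
    FRONTIER = "frontier"    # large hosted model


GREETING = re.compile(
    r"^\s*(hi|hello|hey|thanks|thank you)\b[\s!.,]*$", re.IGNORECASE
)
# Hypothetical cue words suggesting the request needs multi-step reasoning.
COMPLEX_HINTS = {"why", "explain", "compare", "debug", "prove"}


def route(message: str, max_simple_words: int = 40) -> Tier:
    """Return the cheapest tier that should handle this message."""
    if GREETING.match(message):
        return Tier.SCRIPT
    words = re.findall(r"[a-z']+", message.lower())
    looks_complex = len(words) > max_simple_words or bool(
        COMPLEX_HINTS.intersection(words)
    )
    return Tier.FRONTIER if looks_complex else Tier.LOCAL_SLM


for msg in (
    "Hello!",
    "What is the battery life of model X?",
    "Explain why my deployment fails and compare two possible fixes.",
):
    print(f"{route(msg).value:10} <- {msg}")
```

The same three-way branch maps directly onto a switch step in an automation platform: one output per tier, with the frontier model reachable only through the last branch.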

Truth 7: The Edge Computing Shift

The future is local. We are moving compute away from centralized data centers. We are pushing it to edge devices.

Smartphones and laptops now have dedicated neural processing units (NPUs). They can run optimized models directly on the hardware. This bypasses the cloud entirely.

If you build software, figure out how to leverage the user’s hardware. Running inference on a battery-powered laptop is the ultimate form of sustainable AI. It costs you nothing in inference fees. It keeps user data on the device. It scales with your user base without breaking your API budget.

Frequently Asked Questions

Why is sustainable AI an economic issue?

Massive language models require vast amounts of electricity. Power grids are constrained. This constraint drives up data center operational costs. Providers pass those costs directly to developers via high API token pricing. Sustainable AI practices mitigate these exact costs.

How can I make my AI workflows cheaper?

Implement strict prompt caching. Stop processing identical queries. Use small, specialized local models for basic routing and data extraction. Only pay for large cloud models when deep reasoning is strictly necessary.

Does RAG waste compute power?

Yes, if designed poorly. Retrieving too much irrelevant context forces the model to process useless tokens. This increases latency and wastes electricity. Optimize your embedding chunks to be as precise as possible.